GSA SER Link Lists

The Backbone of Automated Link Building

In the world of search engine optimization, efficiency and scale often separate casual efforts from serious campaigns. For those operating in competitive niches, automating repetitive tasks isn't a luxury – it's a necessity. At the center of this automation ecosystem sits GSA Search Engine Ranker, a tool infamous for its ability to build thousands of backlinks on autopilot. However, the engine is only as powerful as its fuel. That fuel comes in the form of GSA SER link lists, curated collections of target URLs that dictate where your backlinks will be placed.

Understanding the Role of Link Lists

A fresh installation of GSA SER includes a set of default platforms and some built-in search engines for scraping targets, but experienced users quickly discover that the real leverage lies in imported lists. These are not simple text files of random domains. High-quality GSA SER link lists are carefully harvested, filtered, and verified collections of URLs that accept various types of submissions – blog comments, guestbooks, forums, trackbacks, article directories, and social bookmarks. Without a steady supply of fresh, live targets, your campaigns will slow to a crawl, wasting resources on failed submissions and on targets that reject duplicate content.

Types of Targets You’ll Encounter

Not all links are created equal, and neither are the lists. A comprehensive collection typically segments URLs by engine type. You might find dedicated lists for:

- Blog Comments: WordPress, Drupal, and custom CMS platforms that allow open commenting.

- Guestbooks: Older but still functional platforms that offer easy insertion points.

- Trackbacks: Pingable URLs that can generate contextual backlinks when configured correctly.

- Article Directories: Sites where spun content can be published with author bio links.

- Social Networks: Micro-blogs, profile pages, and sharing sites that create profile backlinks.

Advanced users often merge multiple GSA SER link lists together, deduplicate them, and run them through a custom verification script before feeding them into a campaign. This ensures that each imported line is an actual submission form, not a dead page or a homepage with no posting functionality.
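
The merge-and-deduplicate step can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular tool: the function names `normalize_url` and `merge_lists` are hypothetical, and the normalization rules (lowercase the host, drop the fragment, treat an empty path as `/`) are assumptions that you may want to tighten or loosen for your own lists.

```python
from typing import Iterable, List, Optional
from urllib.parse import urlsplit, urlunsplit


def normalize_url(raw: str) -> Optional[str]:
    """Return a canonical form of a URL line, or None if it is not a usable http(s) URL."""
    raw = raw.strip()
    if not raw:
        return None
    parts = urlsplit(raw)
    if parts.scheme.lower() not in ("http", "https") or not parts.netloc:
        return None
    # Assumed rules: lowercase scheme and host, drop the fragment,
    # keep path and query untouched, and treat an empty path as "/".
    path = parts.path or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def merge_lists(*lists: Iterable[str]) -> List[str]:
    """Merge several iterables of URL lines, keeping the first occurrence of each URL."""
    seen = set()
    merged = []
    for lines in lists:
        for line in lines:
            url = normalize_url(line)
            if url is not None and url not in seen:
                seen.add(url)
                merged.append(url)
    return merged
```

Each argument to `merge_lists` can be a list of strings or an open text file, since both yield one line at a time. Checking whether a URL is actually a submission form is a separate step and is not covered by this sketch.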

Why Freshness Is Non-Negotiable

The web is decaying constantly. Domains expire, comment forms are closed, sites migrate to HTTPS and break old submission paths. A link list that was thriving six months ago can be virtually useless today. Wasting software threads on 404 errors and timeouts directly impacts your verification rate and final link count. This is why top-tier providers of GSA SER link lists update their inventories weekly or even daily. They use scraping tools that comb search engines using footprints like "powered by wordpress" + "leave a comment" and then test each candidate live. Automation of the list-building process itself has become a sub-industry.

Footprints: The Language of Target Discovery

Building your own lists requires understanding how to craft search queries that reveal specific platforms. A typical footprint for finding guestbook targets might include strings like “add entry”, “guestbook”, “name:”, and “email:”. For blog comments, footprints like “leave a reply” and “your email address will not be published” are part of the collector’s toolkit. When you buy or download custom GSA SER link lists, you're essentially paying for someone else's expertise in running these complex scraping operations at scale, often across multiple search engine APIs and proxies.

Filtering for Quality, Not Just Quantity

Any software can import a million URLs. The real art is in the filtering. A saturated server log filled with failed submissions provides no SEO value. Effective filtering starts with metrics that matter:

- NoFollow Status: Many lists tag each URL with its nofollow status, giving you the choice to include or exclude those targets based on your risk tolerance.

- Domain Authority Thresholds: Modern GSA SER link lists sometimes come pre-scored, allowing you to import only targets above a certain DA or DR, cutting out pure spam zones.

- Outbound Link Count: A blog page with 500 external links in its comments section is a red flag. Advanced filters prune those before they reach your campaign.

- Language and Country: If your main site is in English, you don't need French or Russian targets unless you're building links for a specific geo-campaign.
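
The four filters above can be expressed as a single pass over pre-scored records. The sketch below is illustrative only: the `Target` record, its field names, and the default thresholds (`min_score=20`, `max_outbound=100`) are assumptions made for the example, not a format or set of values that any list provider is known to use.

```python
from dataclasses import dataclass
from typing import Iterable, List, Sequence


@dataclass
class Target:
    """One pre-scored list entry (hypothetical layout)."""
    url: str
    nofollow: bool        # True if links on the page carry rel="nofollow"
    domain_score: int     # a DA or DR style score supplied with the list
    outbound_links: int   # external links already present on the page
    language: str         # language code such as "en"


def filter_targets(
    targets: Iterable[Target],
    *,
    allow_nofollow: bool = False,
    min_score: int = 20,
    max_outbound: int = 100,
    languages: Sequence[str] = ("en",),
) -> List[Target]:
    """Keep only the targets that pass every filter."""
    kept = []
    for t in targets:
        if t.nofollow and not allow_nofollow:
            continue  # excluded by nofollow status
        if t.domain_score < min_score:
            continue  # below the authority threshold
        if t.outbound_links > max_outbound:
            continue  # page is already saturated with external links
        if t.language not in languages:
            continue  # wrong language for this campaign
        kept.append(t)
    return kept
```

Keeping the thresholds as keyword arguments makes it easy to run the same list through stricter or looser settings and compare how many targets survive.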

Custom Lists vs. Public Scrapes

You’ll find two main classes of GSA SER link lists in circulation. Public scrapes are widely shared, often downloaded from forums or SEO groups. While they can be a decent starting point for testing, their footprint is well-known, and many of those URLs are already burned – blacklisted or spammed to death. Custom, private lists are built by solo operators or small teams who use residential proxies, rotating user agents, and proprietary checks to maintain a high percentage of virgin targets. They cost more, but the ratio of verified links per import is dramatically higher. If you value your server resources and time, investing in a reputable private source often yields a better return than clicking through thousands of wasted domains from a free share.

Integrating Lists into Your Workflow

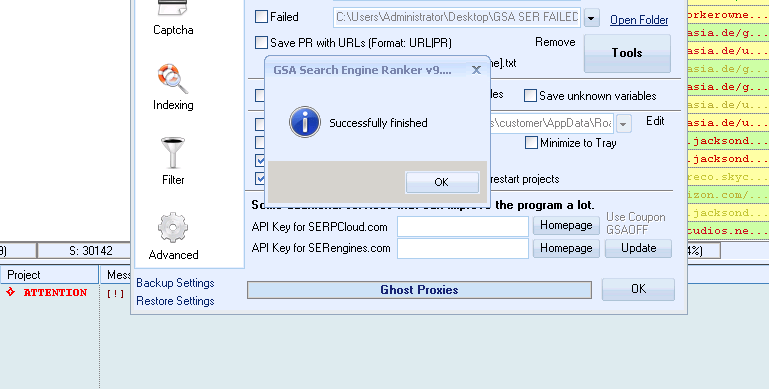

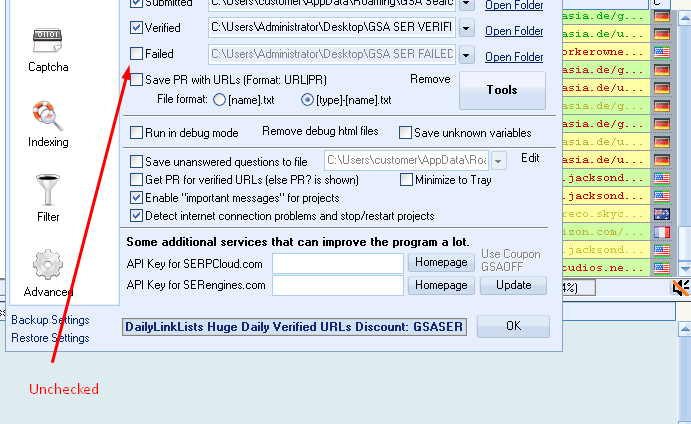

Once you have a reliable set, the process is straightforward. In GSA SER, you navigate to the project options and select the “Tools” menu to import URLs. But the success of GSA SER link lists isn't just about the import; it's about the campaign settings that process them. You need to balance the number of active threads, the pause between submissions, and the captcha solving service. Even the best list will produce bans if you attack each server too aggressively. Savvy operators use randomization – rotating through different list segments for each project so that no single domain gets hit with identical patterns repeatedly.

Maintaining and Verifying Over Time

Your initial import might contain 50,000 targets. After a week, perhaps 15,000 have accepted a link successfully. Many of the remaining 35,000 didn’t fail because of the list; they failed because of platform-specific issues like Ajax forms, login requirements, or aggressive spam filters. The key to a sustainable system is to re-verify your imported GSA SER link lists outside of GSA, using a dedicated link checker. Such a tool requests each URL, checks for the presence of a submission form or your target anchor text, and exports a cleaned version. By feeding this cleaned list back into new campaigns, you stop bleeding proxy bandwidth and threads on dead ends.
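
The page-level check that a link checker performs can be sketched with Python's standard library alone. This is a simplified illustration under stated assumptions: the helper `classify_page` is hypothetical, "submission form" is approximated as a `<form>` that contains a `<textarea>`, and the anchor text is matched case-insensitively against the page's visible text. Fetching the page is deliberately left out, so the function takes HTML you have already downloaded.

```python
from html.parser import HTMLParser


class _FormAndTextScanner(HTMLParser):
    """Records whether a <textarea> appears inside a <form>, and collects page text."""

    def __init__(self):
        super().__init__()
        self.has_textarea_form = False
        self._form_depth = 0
        self.text_parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            self._form_depth += 1
        elif tag == "textarea" and self._form_depth:
            self.has_textarea_form = True

    def handle_endtag(self, tag):
        if tag == "form" and self._form_depth:
            self._form_depth -= 1

    def handle_data(self, data):
        self.text_parts.append(data)


def classify_page(html: str, anchor_text: str) -> dict:
    """Report whether the page seems to have a submission form and whether it contains the anchor text."""
    scanner = _FormAndTextScanner()
    scanner.feed(html)
    scanner.close()
    text = " ".join(scanner.text_parts).lower()
    return {
        "has_form": scanner.has_textarea_form,
        "has_anchor": anchor_text.lower() in text,
    }
```

A URL whose page returns neither a form nor the anchor text is a candidate for removal from the cleaned list. Forms rendered by JavaScript will not be seen by this kind of static check, which is one reason Ajax-based targets show up as failures.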

Ethical Considerations and Link Risk

No discussion of automated link lists is complete without acknowledging the stark reality: Google considers most automatically placed links to be spam. A site hit with a manual action due to “unnatural links” usually has a backlink profile flush with the kind of submissions that generic GSA SER link lists produce. This doesn't make the tool or the lists inherently bad, but it demands a strategic shift. The modern approach is to use these lists for tier-2 and tier-3 link building – never pointing them directly at your money site. They can boost the authority of your buffer properties, like social profiles, Web 2.0 blogs, and wikis, which then link to your main domain in a more controlled, high-quality manner. Treat the lists as dirt to build foundations, not as the façade of your house.

The Future of Automated Targets

The arms race continues. As search engines get better at detecting scraped footprints, the providers of GSA SER link lists are moving toward contextual discovery. Instead of relying on Google dorks, they are parsing sitemaps, RSS feeds, and newly registered domains in real-time. AI is beginning to play a role in identifying submission forms visually, bypassing the footprint entirely. The lists of tomorrow will be dynamic, self-updating streams rather than static .txt files. But the core principle remains unchanged: the quality of your input dictates the output. Without a meticulously maintained, filtered, and ethically tiered collection of targets, even the most powerful automation software becomes an expensive paperweight.

In the end, GSA SER link lists are a force multiplier. They transform a capable piece of software into a relentless link acquisition machine. Yet they require respect. Blindly importing everything with an open commenting function is the fastest path to a penalty. Shield your main assets, constantly prune your sources, and treat every URL as ephemeral. The search landscape rewards those who combine automation with intelligence, and that balance begins with the lists you choose to trust.